Key Takeaways

- Three deployment architectures with different risk profiles: laptop, on-network, enterprise cloud

- OAuth 2.0 with MFA on the integration's identity is the safer authentication path

- Four MCP risk categories deserve separate treatment: credentials, permissions, implementation flaws, prompt injection

- Six enterprise paths to keep AI inside your security perimeter, including Zero Data Retention

- SOX and SOC 2 audit questions are coming. Design controls alongside the integration, not after

Why I'm writing this

A couple of weeks ago I published a video titled "How to Connect Claude AI to Oracle EPM Cloud | MCP + REST APIs." That's where I open-sourced an MCP server that lets Claude talk to Oracle EPM through the REST APIs. The response was strong. A lot of finance and EPM folks reached out.

This past Thursday I followed up with "How to Set Up an AI Agent for Oracle EPM Cloud, Step by Step," walking through the actual setup.

The most common follow-up question, asked many ways: will my data be safe if I do this?

It's the right question to ask. And it needs more than a one-line reply. So this article is the long-form version. It's the first of an ongoing series I'll keep updating as the Oracle EPM platform evolves, the MCP ecosystem matures, and I learn more from real client environments.

A note up front. I built and open-sourced the Oracle EPM MCP server this article refers to. The questions in the title come straight from finance leaders, EPM peers, and Oracle ACEs who reached out in the comments and DMs. What follows is what I've learned so far, drawn from building the server, reading the security research, and working through these questions with the community. I'll keep updating it as I take this into real client environments and see what actually goes wrong.

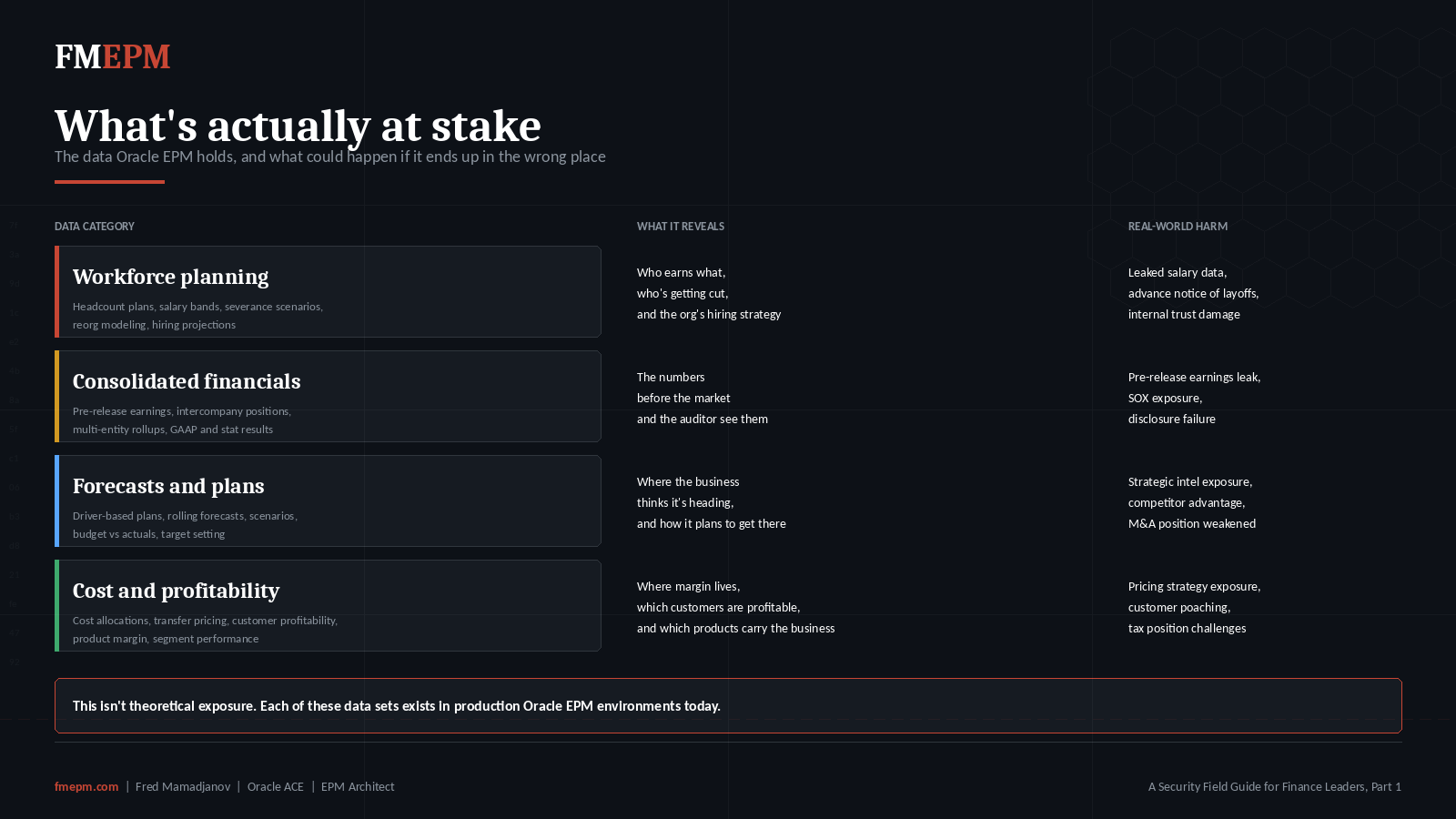

What's actually at stake

Oracle EPM Cloud holds the most sensitive numbers in your business.

Workforce planning data, with salaries, headcount plans, and severance scenarios. Consolidated financials before they're released. Pre-release forecasts that nobody outside Finance is supposed to see yet. Driver-based plans that map directly to strategy. Cost allocations that tell you where margin lives. Tax provisioning. Reorg modeling.

When you connect AI to Oracle EPM, you're not adding another reporting tool. You're connecting software to numbers that can cause real damage if they leak. Salary data in a workforce plan. Pre-release earnings. Compensation analysis sitting on a server you don't fully control.

The risk profile is different from connecting AI to a customer support tool. So the AI security controls have to be different too.

This is not me arguing against using AI with Oracle EPM. I build these integrations. The productivity gains are real, especially for close cycles, variance commentary, and forecast workflows. But the gains only matter if the controls are real too.

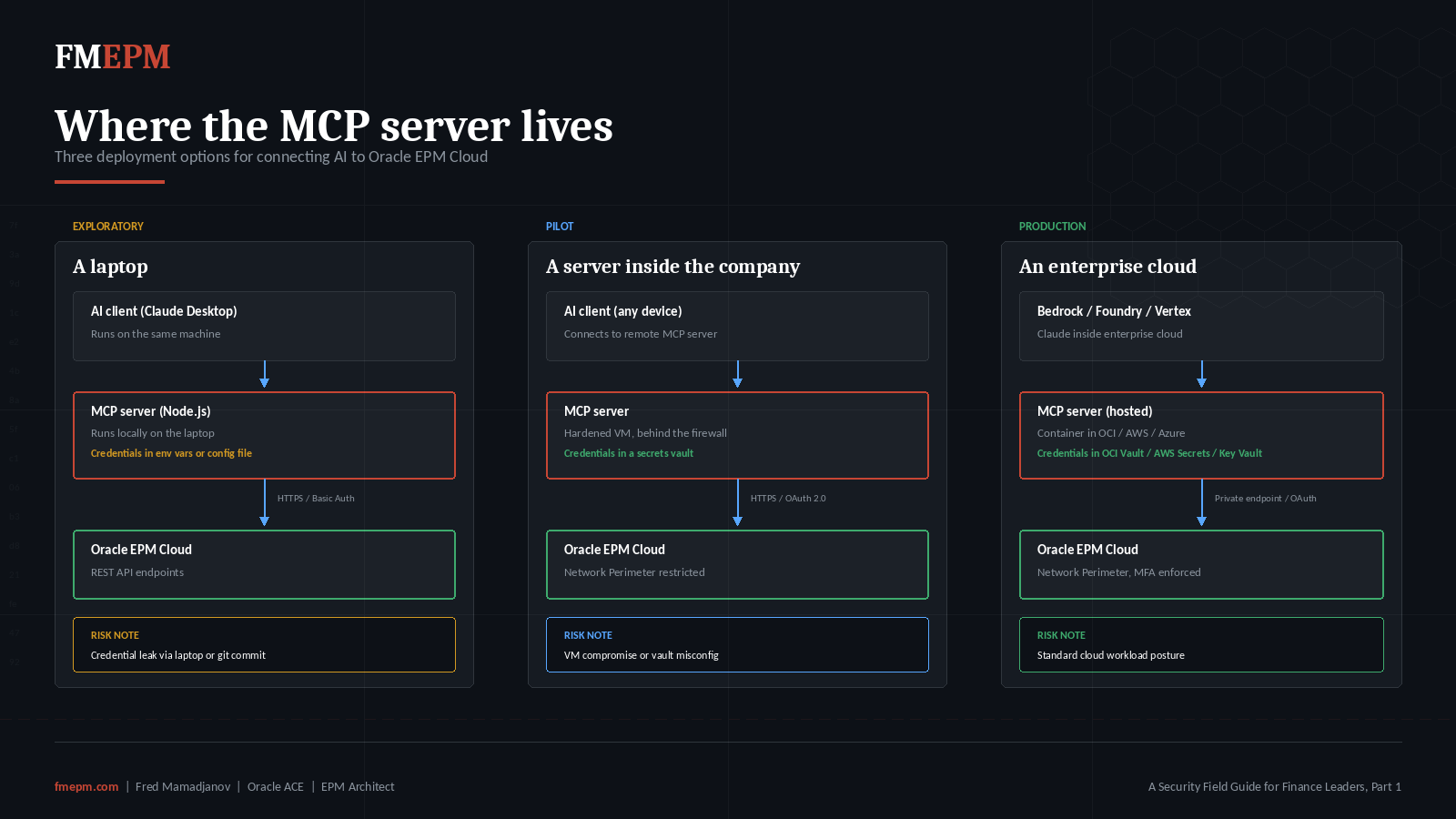

Where the MCP server actually lives

Before talking about controls, it helps to know exactly where the MCP server sits in your environment. The controls only make sense once you can picture what they're protecting.

In a typical Oracle EPM AI integration today, there's a small piece of software called an MCP server sitting between the AI assistant (Claude, for example) and Oracle EPM. The MCP server holds the connection details to Oracle EPM and exposes specific actions the AI is allowed to take, for example listing applications, running business rules, or pulling substitution variables.
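To make "exposes specific actions" concrete, here is a minimal sketch of the idea in Python. It is not the code from my published server. The tool names, the stub return values, and the `call_tool` helper are all made up for illustration, and a real server would call the Oracle EPM REST APIs inside each handler.

```python
from typing import Callable, Dict

# Hypothetical stub handlers. A real MCP server would call the
# Oracle EPM REST APIs here instead of returning canned values.
def list_applications() -> list:
    return ["Vision"]

def get_substitution_variables() -> dict:
    return {"CurrMonth": "Jan"}

# Only actions registered in this table are reachable by the AI assistant.
EXPOSED_TOOLS: Dict[str, Callable[[], object]] = {
    "list_applications": list_applications,
    "get_substitution_variables": get_substitution_variables,
}

def call_tool(name: str) -> object:
    """Dispatch a tool request, refusing anything that was not registered."""
    handler = EXPOSED_TOOLS.get(name)
    if handler is None:
        raise PermissionError(f"tool not exposed: {name}")
    return handler()
```

The point of the sketch is the shape: the assistant can only ask for names in the table, and anything else is refused before it gets near Oracle EPM.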

That MCP server can run in three places. Each has a different security profile.

Option 1: A laptop. The MCP server runs on the same machine as the AI assistant. This is the typical setup for someone trying it out for the first time or running a personal experiment. Credentials sit on that laptop in a config file or as environment variables.

Option 2: A server inside the company network. The MCP server runs behind the company firewall, and credentials are stored in a secrets manager rather than a config file.

Option 3: An enterprise cloud environment. OCI, AWS, or Azure. The MCP server runs as a hosted workload in the same cloud the company already trusts for other workloads. Credentials live in a proper secret store like OCI Vault, AWS Secrets Manager, or Azure Key Vault.

From what I've seen, most experiments today start in Option 1. Each option calls for different controls, so let's walk through them separately.

A quick word on credentials and tokens

Before going further, let's clear up some terminology since it shows up everywhere below.

A password is what you and I think of: a string you type in to prove who you are. When a piece of software needs to log in to Oracle EPM, it has to send the username and password somewhere too. The simplest method, called Basic Authentication, sends the username and password together in every request, encoded in a format called base64. Base64 isn't encryption. It's a way to package text so it can travel cleanly over the network. Anyone who reads the request can decode it back to your password. So if a config file or log file leaks, your credentials leak with it.
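If you want to see why base64 offers no protection, the short Python sketch below builds a Basic Authentication header and then reverses it using only the standard library. The username and password are placeholders I made up.

```python
import base64
from typing import Tuple

def basic_auth_header(username: str, password: str) -> str:
    """Build the value of an HTTP Basic Authentication header."""
    raw = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")

def decode_basic_auth_header(header: str) -> Tuple[str, str]:
    """Recover the username and password from a captured header. No key needed."""
    scheme, _, encoded = header.partition(" ")
    if scheme != "Basic":
        raise ValueError("not a Basic auth header")
    username, _, password = base64.b64decode(encoded).decode("utf-8").partition(":")
    return username, password

# Placeholder credentials, for illustration only.
header = basic_auth_header("user", "pass")
print(header)                            # Basic dXNlcjpwYXNz
print(decode_basic_auth_header(header))  # ('user', 'pass')
```

Anyone who captures that header from a log or a proxy can run the second function.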

A token is a different idea. The software trades the username and password once for a short-lived pass, and then uses the pass for a while. Think of it like a hotel key card. You show your ID at the front desk once, you get a key card, and the key card opens your room for a limited time. If you lose the key card, the desk can deactivate it without changing your ID.

Oracle EPM supports two kinds of tokens. An access token is the actual key card. It's good for one hour by default. A refresh token is a longer-lived voucher you use to ask for a new access token. It's good for seven days by default. Both numbers are configurable.

The official protocol Oracle uses is called OAuth 2.0. Oracle's own documentation recommends OAuth over Basic Authentication for the reasons above.

One thing worth clarifying for finance leaders: enabling MFA on the integration's identity does not mean someone has to approve a phone notification every time the AI runs. The MFA prompt happens once, during the initial OAuth setup. After that, the integration uses the refresh token to silently get new access tokens. Automation runs without human prompts. MFA only re-engages if the refresh token expires and a new one has to be issued.
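The decision the integration makes before each call can be sketched in a few lines of Python. This is an illustration of the logic, not Oracle's implementation. The one-hour and seven-day values are the defaults mentioned above, while the 60-second safety margin and every name in the code are my own assumptions.

```python
from dataclasses import dataclass

ACCESS_TTL_SECONDS = 60 * 60             # one hour, the default described above
REFRESH_TTL_SECONDS = 7 * 24 * 60 * 60   # seven days, the default described above

@dataclass
class TokenState:
    access_issued_at: float    # seconds since some fixed epoch
    refresh_issued_at: float
    access_ttl: int = ACCESS_TTL_SECONDS
    refresh_ttl: int = REFRESH_TTL_SECONDS

def next_step(state: TokenState, now: float, margin: int = 60) -> str:
    """Decide what the integration should do before its next API call."""
    if now >= state.refresh_issued_at + state.refresh_ttl:
        # Refresh token is dead: a human has to redo the OAuth setup with MFA.
        return "reauthorize_with_mfa"
    if now >= state.access_issued_at + state.access_ttl - margin:
        # Access token is expired or about to expire: refresh, no human prompt.
        return "refresh_silently"
    return "use_access_token"
```

Only the first branch involves a person. Everything else runs unattended.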

Oracle's documentation on this:

- REST API authentication with OAuth 2 (Oracle Cloud EPM)

- Use of OAuth 2 Tokens for REST APIs and EPM Automate

That's the foundation. Now let's talk about the actual risks.

The four risk categories worth separating

Public discussion of MCP security tends to mash everything together. For Oracle EPM specifically, four categories deserve their own treatment because the fixes are different for each.

- Credential and token risk

- Permissions on the Oracle EPM side

- Implementation flaws in the MCP server itself

- Indirect prompt injection (real, but situational)

There's also a fifth concern that comes up often in conversations with finance leaders. Where does my data physically go when the AI processes it? I'll cover that one separately at the end, because it's not really an MCP problem. It's an AI platform decision.

Let's take each in turn.

Risk 1: Credentials and tokens

Credentials are typically the first thing to go wrong in a new integration. The credential or token has to live somewhere on the client side, and if that "somewhere" leaks, the attacker has the same access the integration has.

Three things help.

Multi-Factor Authentication (MFA). When MFA is enabled on the user account that owns the AI integration, Basic Authentication stops working. The integration has no choice but to use OAuth 2.0. Enabling MFA is one of the simplest ways to force the integration onto the safer protocol.

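Rotation. OAuth tokens expire on their own. If an access token leaks, the damage window is short: one hour by default. A leaked refresh token lasts longer (seven days by default) and can be used to request new access tokens, so it needs the same protection as a password. Either way, a stolen token stops working on its own, and the attacker has to steal again to keep access, which is much harder than stealing once.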

Where the credential lives. Plain text in a config file is the riskiest spot. A secrets vault inside a controlled environment is a much better starting point. The vault stores the credential in encrypted form and only releases it to the integration when needed, which means a leaked file or log doesn't leak the credential itself. This is a basic hygiene step that's often skipped in early experiments.
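Here is a rough Python sketch of that pattern. It assumes your vault's client library gives you some function that returns a secret by name. I represent that with a generic `fetch` callable, because the real call differs between OCI Vault, AWS Secrets Manager, and Azure Key Vault. The `Secret` wrapper and the secret name are hypothetical.

```python
from typing import Callable, Optional

class Secret:
    """Holds a credential so that accidental logging prints a placeholder."""

    def __init__(self, value: str) -> None:
        self._value = value

    def reveal(self) -> str:
        """Return the real value. Call this only at the point of use."""
        return self._value

    def __repr__(self) -> str:
        return "Secret(****)"

    __str__ = __repr__

def load_secret(name: str, fetch: Callable[[str], Optional[str]]) -> Secret:
    """Fetch a credential through the vault client passed in as `fetch`."""
    value = fetch(name)
    if not value:
        # Fail loudly instead of falling back to a plain-text config file.
        raise RuntimeError(f"secret {name!r} not available from the vault")
    return Secret(value)
```

The wrapper is a small extra: if someone logs the object by mistake, the log shows `Secret(****)` instead of the token.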

Picking a specific vault and a specific rotation cadence comes down to what your company already runs and what your change management process looks like. Get those decisions made before the integration goes anywhere near production.

Risk 2: Permissions on the Oracle EPM side

Oracle EPM gives you four predefined functional roles to control access: Service Administrator, Power User, User, and Viewer. It's tempting to grant Service Administrator to a new AI integration because it works on the first try. The downside is obvious. If that account leaks, the attacker has full admin rights to the application.

There's a better path. Oracle has been adding granular roles to Cloud EPM through Application Roles in Access Control. These let you give a user just enough access to do a specific job, on top of a lower predefined role. For example, you can give someone the Viewer predefined role and then add the Manage Reports application role so they can design reports without giving them Power User across the whole environment. As of the 26.01 update, Oracle is officially aligning the terminology: identity-domain roles are now called Application Roles, and business-process-specific roles are called Granular Roles in the documentation.

For Oracle EPM AI integrations, my starting recommendations are:

For a read-only AI assistant (variance explanation, dashboard reads, ad-hoc queries): start with the Viewer or User predefined role and add the specific Granular Roles needed for the data domain.

For an AI assistant that writes (running business rules, loading data, updating substitution variables): start with Power User and limit the artifact-level permissions to what the use case actually needs.

Service Administrator should be reserved for the actual humans who administer the application. Not the AI.

A real Oracle EPM constraint to be aware of: the REST APIs don't yet offer per-tool authorization the way OCI IAM does for cloud resources. You can't issue a token that's allowed to "run business rules but not export data." Permissions are scoped through the user's role and user groups, not through the API call itself. So in practice you scope by creating a dedicated user account per use case rather than trying to enforce fine-grained tool-level controls inside the MCP server.

Oracle's documentation on this:

- Understanding Predefined Roles

- Mapping Granular Roles with Predefined Roles

- Authentication with OAuth 2 (Oracle Cloud EPM). The OAuth setup is registered to a specific Oracle EPM user identity. That user identity is what the AI integration runs as, and what shows up in every audit log entry.

A "dedicated user identity" is a regular Oracle EPM user account set up specifically for the integration. Think of it as a service account for the AI. It's not a human user, and it shouldn't hold the Service Administrator role. OAuth tokens are issued against that account, and every action the AI takes shows up in the log under that name.
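One way to keep the "one dedicated identity per use case" rule honest is to write it down as data and check it. The Python sketch below is my own illustration. The account names and use cases are invented. The role names are the four predefined roles listed earlier.

```python
from dataclasses import dataclass

PREDEFINED_ROLES = ("Service Administrator", "Power User", "User", "Viewer")

@dataclass(frozen=True)
class IntegrationIdentity:
    use_case: str
    epm_user: str          # hypothetical dedicated account name
    predefined_role: str

def validate(identity: IntegrationIdentity) -> None:
    """Reject identities that break the recommendations in this section."""
    if identity.predefined_role not in PREDEFINED_ROLES:
        raise ValueError(f"unknown role: {identity.predefined_role}")
    if identity.predefined_role == "Service Administrator":
        raise ValueError("Service Administrator is reserved for human admins")

# Example inventory: one account per use case, none of them admin.
IDENTITIES = [
    IntegrationIdentity("variance_commentary", "svc_ai_readonly", "Viewer"),
    IntegrationIdentity("rule_execution", "svc_ai_rules", "Power User"),
]
for identity in IDENTITIES:
    validate(identity)
```

A table like this also gives your auditors a single place to see which account the AI runs as for each job.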

Risk 3: Implementation flaws in the MCP server

The MCP server is a piece of software. Like any software, it can have bugs. Some bugs are minor. Some are security issues.

A CVE (Common Vulnerabilities and Exposures) is a public ID assigned to a known security flaw in a piece of software. When researchers find a vulnerability and disclose it responsibly, it gets a CVE number, gets logged in a public database, and the maintainer either patches it or publishes a workaround.

Here's what we know so far. The MCP ecosystem has produced a wave of CVEs through 2025 and into 2026. The protocol is new, the rate of new servers being published is high, and security teams are still building the muscle to evaluate them.

The clearest enterprise-aimed framing I've read is Red Hat's Understanding security risks and controls in the Model Context Protocol. It's short, written for security teams who actually have to make decisions, and I recommend it as a starting point. OX Security's research on the systemic flaw in Anthropic's MCP SDKs goes deeper. Their report ties multiple recent CVEs (CVE-2025-49596, CVE-2025-54136, CVE-2026-22252) back to a single design decision in Anthropic's official SDKs. Because that decision sits at the protocol level, the same flaw shows up across many MCP implementations rather than being isolated to one project.

Two categories of bug come up often enough that finance teams should know them by name:

Path traversal. This is when a piece of software lets an attacker reach files it shouldn't, by tricking it into reading from a different directory than intended. Imagine a librarian who's supposed to fetch books from one shelf but can be tricked into wandering into the back office.
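For readers who review code, a common defence looks like the Python sketch below: resolve the requested path and refuse it unless it stays inside the one directory the server is meant to serve. The function name is mine, and this is a generic pattern, not a description of any particular MCP server.

```python
from pathlib import Path

def resolve_inside(base_dir: Path, requested: str) -> Path:
    """Return the resolved path, or refuse if it escapes base_dir."""
    base = base_dir.resolve()
    # resolve() collapses ".." segments and follows symlinks before we compare.
    candidate = (base / requested).resolve()
    if candidate != base and base not in candidate.parents:
        raise PermissionError(f"path escapes {base}: {requested}")
    return candidate
```

With this check, a request for `exports/q1.csv` is allowed, while `../secrets.txt` or an absolute path such as `/etc/passwd` raises an error instead of being read.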

Command injection. This is when a piece of software builds an operating-system command from user input without checking the input first. The attacker sneaks in extra commands. The software runs them. The fix is to never construct OS commands from untrusted input, full stop.
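In code, "never construct OS commands from untrusted input" can look like the Python sketch below. The input from the AI is only ever used as a lookup key into a table of fixed commands, and no shell is involved. The command table is a placeholder I made up. It simply runs the current Python interpreter so the example is safe to try.

```python
import subprocess
import sys

# Fixed argument lists written by the developer, never by the caller.
ALLOWED_COMMANDS = {
    "health_check": [sys.executable, "-c", "print('ok')"],
}

def run_allowed(name: str) -> str:
    """Run a pre-approved command. The input only selects which one."""
    argv = ALLOWED_COMMANDS.get(name)
    if argv is None:
        raise PermissionError(f"command not allowed: {name}")
    # Passing a list with shell=False means nothing is parsed by a shell.
    result = subprocess.run(argv, capture_output=True, text=True, check=True, shell=False)
    return result.stdout.strip()
```

An attacker who sends `health_check; rm -rf /` gets a refusal, because that string is not a key in the table.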

Both have CVE numbers attached and both have been documented in real production MCP servers. Neither is theoretical.

So how should a finance team think about which MCP server to trust? A few guideposts:

If the server is open source, you can read the code yourself, or ask someone who can. Three things matter when reviewing the source: how it interacts with the operating system, how it handles file inputs from the AI, and where it sends network traffic. Each of those is a place where the kinds of bugs above tend to surface, and each one deserves a defensible answer before the server goes near production data.

Pin a specific version. When you pick a version of the MCP server that you've reviewed, lock your environment to that exact version. Don't let it auto-update. If a new version comes out, treat it like any other change to a production integration: review it, test it, then roll it out.
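One way to enforce a pin, assuming you recorded the SHA-256 digest of the release you reviewed, is to check the downloaded artifact against that digest before installing it. The Python sketch below shows the check. The function name and the sample bytes are illustrative.

```python
import hashlib

def verify_pinned_artifact(data: bytes, pinned_sha256: str) -> None:
    """Raise if the artifact is not byte-for-byte the one that was reviewed."""
    actual = hashlib.sha256(data).hexdigest()
    if actual != pinned_sha256.strip().lower():
        raise RuntimeError(f"digest mismatch: expected {pinned_sha256}, got {actual}")

# Illustration: record the digest at review time, verify at deploy time.
reviewed_release = b"contents of the release you reviewed"
pinned = hashlib.sha256(reviewed_release).hexdigest()
verify_pinned_artifact(reviewed_release, pinned)  # passes silently
```

Package managers offer their own version of this, such as lock files with hashes. The idea is the same: what you deploy must match what you reviewed.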

If the server is closed source, you can't read the code. In that case, ask the vendor for a security review report, or have your own security team do an assessment before connecting it to your environment. A security review is a structured walk-through of the code's behavior to identify unsafe patterns. Most enterprise software vendors are used to this request.

A specific note on MCP marketplaces. A lot of MCP servers are now distributed through public directories. Research from OX Security showed that 9 of 11 major MCP marketplaces accepted poisoned submissions during their testing. That doesn't mean every marketplace server is malicious. It means the directory model doesn't yet have strong trust signals built in, and a finance team shouldn't treat "found it on a marketplace" as endorsement.

For my own open-source server, the public version on GitHub is the one I built for learning and personal experiments. A production deployment running close cycles needs a different setup. The audit layer and the secret management have to be designed for production from the start. That part is always a customer-specific conversation.

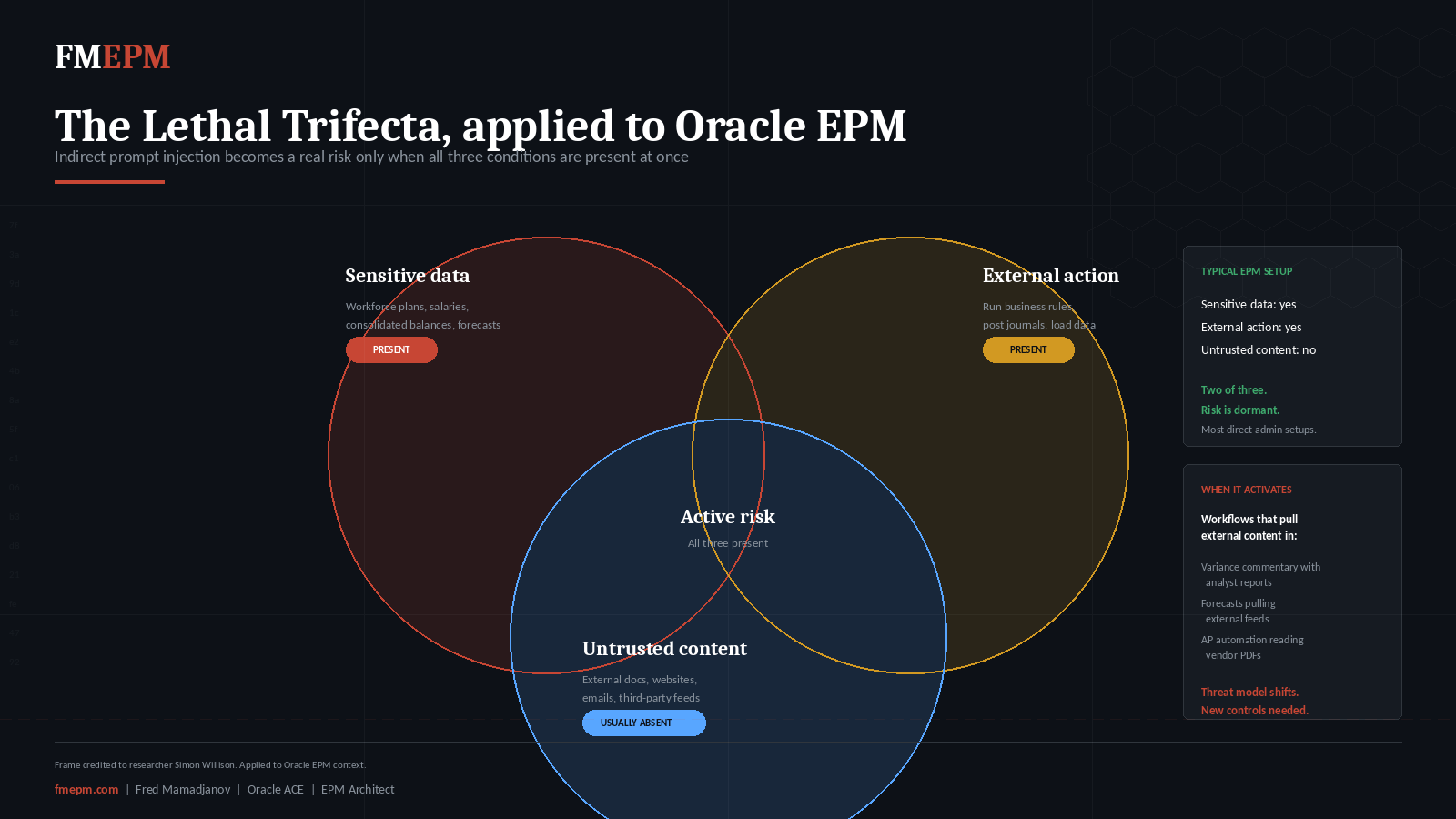

Risk 4: Indirect prompt injection

Of all the risks in this article, this one gets the most security-press coverage and is the least relevant to typical Oracle EPM AI setups today. I'm including it anyway because the moment teams expand the workflow, it becomes relevant fast.

What it actually is. Indirect prompt injection is when an attacker hides instructions inside content that the AI later reads. The AI can't tell the difference between content it was asked to summarize and instructions buried inside that content. So it follows the hidden instructions.

A useful frame for non-technical readers comes from researcher Simon Willison, whose writing on this is the clearest explanation I've found. He calls it the lethal trifecta. An AI agent becomes vulnerable when it has all three of these at once: access to sensitive data, exposure to untrusted content, and the ability to take action on something external. Take any one of the three away, and the risk drops sharply.

For a typical Oracle EPM MCP setup today, look at where the trifecta sits.

- Sensitive data: yes (workforce plans, consolidated balances, forecasts)

- Ability to take action: yes (run rules, load data, update substitution variables)

- Untrusted content: usually no. The AI is reading a finance person's prompts and Oracle EPM's own API responses. That's the loop

Two of three. The risk is dormant in this configuration.
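The checklist above is simple enough to write as a few lines of Python, which can be handy as a design-review gate. This is my own framing of Willison's three conditions, with field names I chose.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AgentExposure:
    sensitive_data: bool      # can the agent read data that must not leak?
    untrusted_content: bool   # does it read content an outsider could author?
    can_act: bool             # can it take actions beyond answering?

    def trifecta_complete(self) -> bool:
        """True only when all three conditions hold at once."""
        return self.sensitive_data and self.untrusted_content and self.can_act

# The typical Oracle EPM MCP setup described above: two of three.
epm_today = AgentExposure(sensitive_data=True, untrusted_content=False, can_act=True)
print(epm_today.trifecta_complete())  # False
```

Flip `untrusted_content` to `True` and the same check returns `True`, which is the signal to stop and add controls.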

It activates the moment teams start chaining the EPM agent to external content. The AI starts reading commentary from outside the company. The AI summarizes a vendor's PDF. The AI ingests data from a third-party feed. Now the third condition is present, and the risk model changes.

The right response isn't to ban these workflows. It's to recognize that adding outside content into the loop changes the threat model and needs new controls. Most setups today don't include outside content in the loop. As workflows expand, more teams will hit this point, which is why understanding it now matters more than understanding it later.

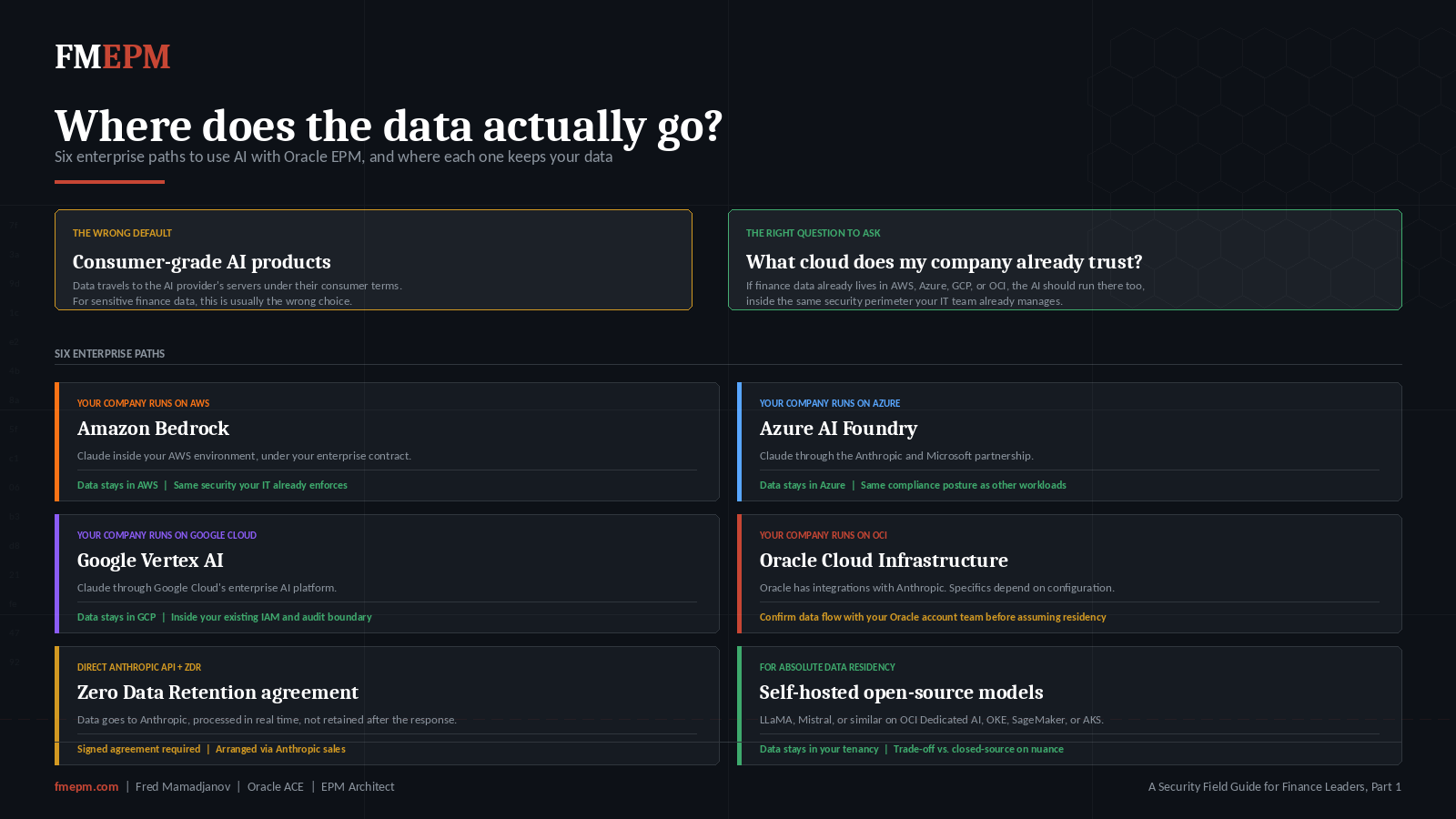

Risk 5: Where does the data actually go

This is the question I get most often from finance leaders, and it deserves a clear answer.

When the AI processes a request, the data has to go somewhere. With consumer-grade Claude or other public AI products, your data travels to the AI provider's servers. They process it. They send back the response. The terms of service describe what they do with it.

For finance teams, that's often the wrong choice for sensitive data. The right choice depends on what cloud the company already runs on:

On AWS: access Claude through Amazon Bedrock. Claude runs inside your AWS environment under your enterprise terms.

On Microsoft Azure: access Claude through Azure AI Foundry. Anthropic and Microsoft have a partnership that puts Claude in Azure AI Foundry for enterprise use.

On Google Cloud: access Claude through Google Vertex AI.

On Oracle Cloud Infrastructure: Oracle has integrations with Anthropic, but in some configurations the data still travels to Anthropic's API for processing. Check the specifics with your Oracle account team. There are also OCI-native options that run inside your tenancy with different model choices.

Direct Anthropic API with Zero Data Retention. For enterprises that want the latest Claude models without routing through a cloud vendor, Anthropic offers a Zero Data Retention (ZDR) agreement on their commercial API. Under ZDR, prompts and responses are processed in real time and not stored by Anthropic after the response is returned, except where required by law or to combat misuse. The data still travels to Anthropic's servers for processing. This is not the same as keeping data inside your own tenancy. That's the compromise. Data passes through Anthropic's infrastructure but isn't kept afterward. Whether that fits your data residency requirements is your call. ZDR requires a signed agreement and is arranged through Anthropic's sales team.

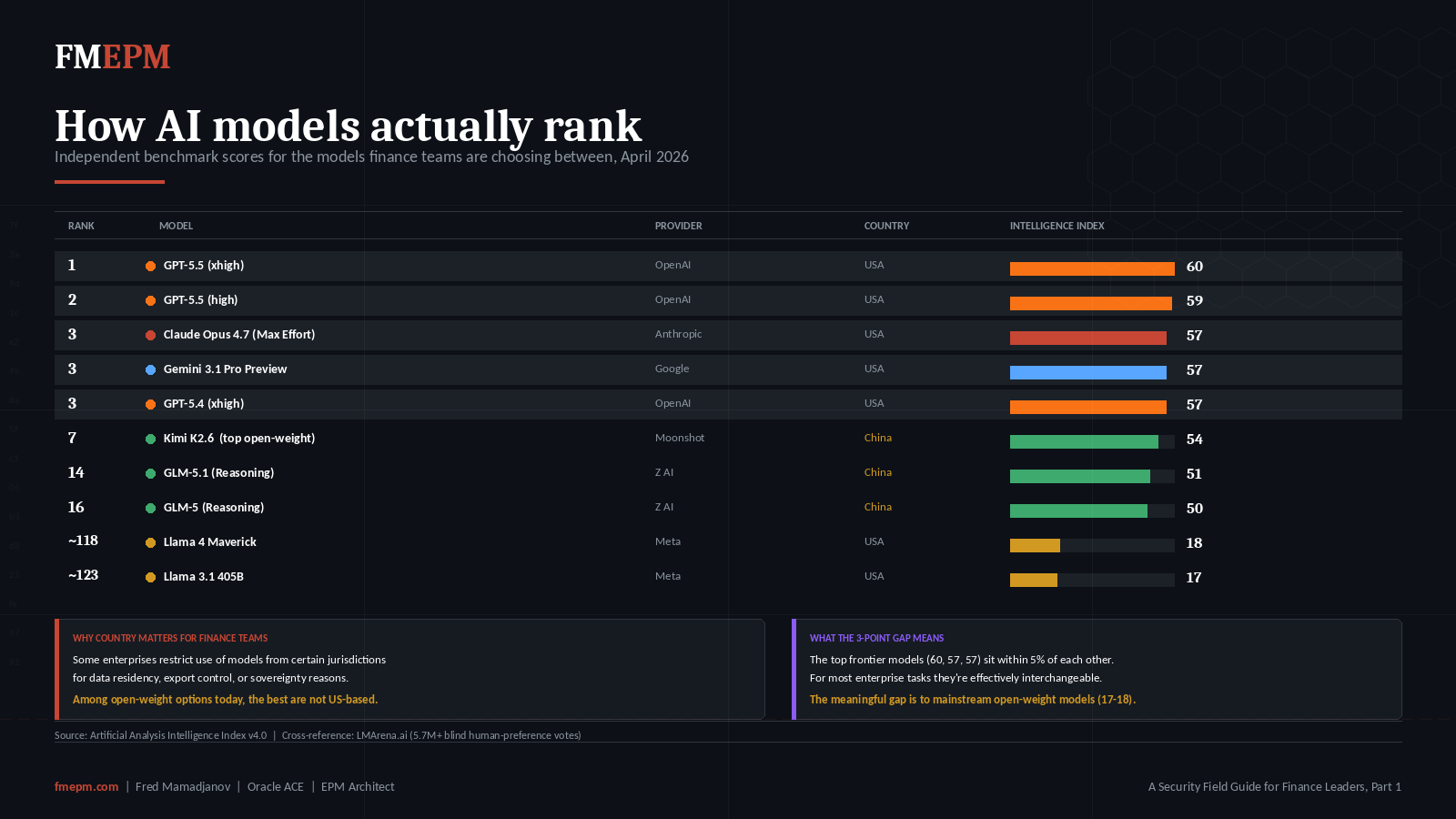

Self-hosted open-source models. A growing path for finance teams that want absolute data residency. Run an open-source model (Llama, Mistral, or similar) on compute inside your own tenancy. On OCI, that's a Dedicated AI Cluster, OCI Generative AI, or OKE compute. On AWS, that's SageMaker or self-managed compute. On Azure, that's AKS with your model of choice. The data never leaves your tenancy because the model itself is inside it.

Open-source models work well for structured tasks like REST API calls, summarization, and routine reporting. For deeper analytical work or nuanced commentary, the leading closed-source frontier models still score higher on independent reasoning benchmarks.

That ranking comes from the Artificial Analysis Intelligence Index, which aggregates ten reasoning evaluations including GPQA Diamond (graduate-level science), Humanity's Last Exam, and long-context reasoning over financial and legal documents. LMArena's human preference leaderboard shows the same general ordering across 5.7 million blind preference votes.

Self-hosting also adds operational work. Deployment, monitoring, fine-tuning, and version updates all become your engineering team's job. The right choice depends on what your AI assistant is actually doing. Some teams run a hybrid: a closed-source frontier model for analysis tasks, a self-hosted open-source model for structured automation.

For any finance team running on a major cloud, there's a path to AI that lives inside the security perimeter your IT team already manages. Same security policies. Same data residency. Same audit logging. Pick the path early, because moving the AI to a different cloud or model after the fact is much harder than picking the right one in the first place.

Designing the controls

What does the actual control set look like for an Oracle EPM AI integration?

It depends. The right design depends on which deployment option you're using, what your change management process already looks like, what your IT audit function expects to see, and which cloud your data lives in. There are six dimensions that need attention from day one. Authentication. Identity. Network access. Software supply chain. Audit trail. Human signoff on writes. Each one is simple to name and harder to get right.

This part of the work I'd rather do with you in your environment than spell out in an article.

The audit question that's coming

If your company is subject to SOX, audited for SOC 2, or governed by any IT general controls regime, your AI integration will surface in the next audit cycle.

Auditors will start asking:

- Who authorized the AI to access financial systems, and what role does it run as?

- What change-management process governs updates to the AI's capabilities?

- How are AI-initiated changes to financial data captured in the audit trail?

- Who reviews those changes?

- Are AI-initiated journal entries or business rule executions distinguishable from human ones in the log?

Most teams running AI pilots today don't have clean answers yet. That's not a reason to stop. It's a reason to bring your IT audit and internal audit teams into the AI security conversation early, so the controls get designed alongside the integration rather than retrofitted after.
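To give a feel for what "distinguishable in the log" can mean, here is a Python sketch of an audit record written by the MCP server itself, alongside whatever Oracle EPM logs on its side. The field names, the `ai_agent` label, and the sample values are my assumptions, not an Oracle format. Storing the full prompt text is a choice your audit and privacy teams should weigh in on.

```python
import json
from datetime import datetime, timezone
from typing import Optional

def audit_record(epm_user: str, tool: str, prompt: str,
                 now: Optional[datetime] = None) -> str:
    """Build one JSON audit line for an AI-initiated action."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    record = {
        "timestamp": timestamp,
        "actor_type": "ai_agent",   # separates AI actions from human ones
        "epm_user": epm_user,       # the dedicated identity the AI runs as
        "tool": tool,               # which exposed action was called
        "prompt": prompt,           # the request that led to the action
    }
    return json.dumps(record, sort_keys=True)

# Hypothetical example values.
print(audit_record("svc_ai_rules", "run_business_rule", "Run the Q1 allocation rule"))
```

A record like this answers two of the questions at once: who the AI ran as, and what request triggered the action.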

Where this leaves you

If you're a CFO or VP Finance and your team is exploring AI for close, planning, or reporting, three questions to put in front of the team building it:

- What authentication method is the AI integration using, and is MFA enforced on the underlying account?

- What Oracle EPM roles does the AI run as, and what specific permissions does it have?

- How do we trace what the AI did, including the prompt that led to each action?

If those three don't have clear answers yet, that's the work that needs to happen before the pilot connects to your production Oracle EPM environment.

What's next in this series

This is a Kaizen series. I'll keep updating it as the platform and the audit guidance evolve.

The next entries will cover Oracle EPM platform updates that shift the security picture, deeper work on layered audit trails, and whatever readers ask me to dig into.

The answers in this space move fast. Treat each part as v1.

A free architecture review

I'm offering free AI security architecture reviews for finance teams building Oracle EPM AI integrations right now. Bring your setup. We'll walk through it together, identify the questions you should be asking inside your own org, and I'll send a short written summary afterward.

Book a 30-Minute Discovery Call →

Resources and Further Reading

GitHub Repository: github.com/fmepm/oracle-epm-mcp-server

Oracle OAuth 2 Documentation: REST API authentication with OAuth 2

Red Hat MCP Security Guide: Understanding security risks and controls in the Model Context Protocol

OX Security Research: The Mother of All AI Supply Chains

Anthropic Zero Data Retention: API and Data Retention Policies

Simon Willison on the Lethal Trifecta: simonwillison.net

Companion Articles: Connect Claude AI to Oracle EPM Cloud · Set Up an AI Agent for Oracle EPM

FMEPM · Fred Mamadjanov · Oracle ACE · EPM Solution Architect

This is not an Oracle product. Oracle EPM Cloud is a trademark of Oracle Corporation. Claude is a trademark of Anthropic PBC. This page describes a custom integration using Oracle's official, documented REST APIs. The lethal trifecta concept is credited to researcher Simon Willison.